While the "fail fast" ethos is good for innovation, performing analysis as part of your project's planning phase can help you understand risks before they materialize and then plan to avoid them.

Lean Startup philosophy holds that innovators should not spend a lot of time getting something perfect on the first try. Instead, product pioneers achieve finished, feature-complete product designs much faster if they fail quickly, learn from the failure, and then iterate.

Unfortunately, some failures are permanent. Sometimes innovators don't fail fast enough, or they fail too often, or they fail during the wrong stage of the project—all of this is borne out by the fact that 14% of all IT projects fail permanently.

On the surface, 14% doesn't seem like a huge number, but it means that businesses collectively waste $97 million for every $1 billion they invest in improvements. What's more, the full impact of failure often goes undiscussed: the project that fails may well have been the one project that would have modernized your company, improved your customer experience, and made the difference between success and failure as an organization. In short, it's best to get your failure rate as close to zero as possible.

To understand how failure can get out of hand and to learn how to keep failure from ending your projects for good, we will introduce a concept known as Failure Mode and Effects Analysis (FMEA). FMEA was originally intended to be a manufacturing discipline, but its scope has been broadened to apply to other industries and to every phase of the product life cycle, from concept to production.

In a nutshell, FMEA starts by asking:

There are two ways to understand failure modes. One way is to conduct a risk assessment. The other way is to conduct a project assessment, analyzing the qualitative and quantitative aspects of the project itself. It's best to do both.

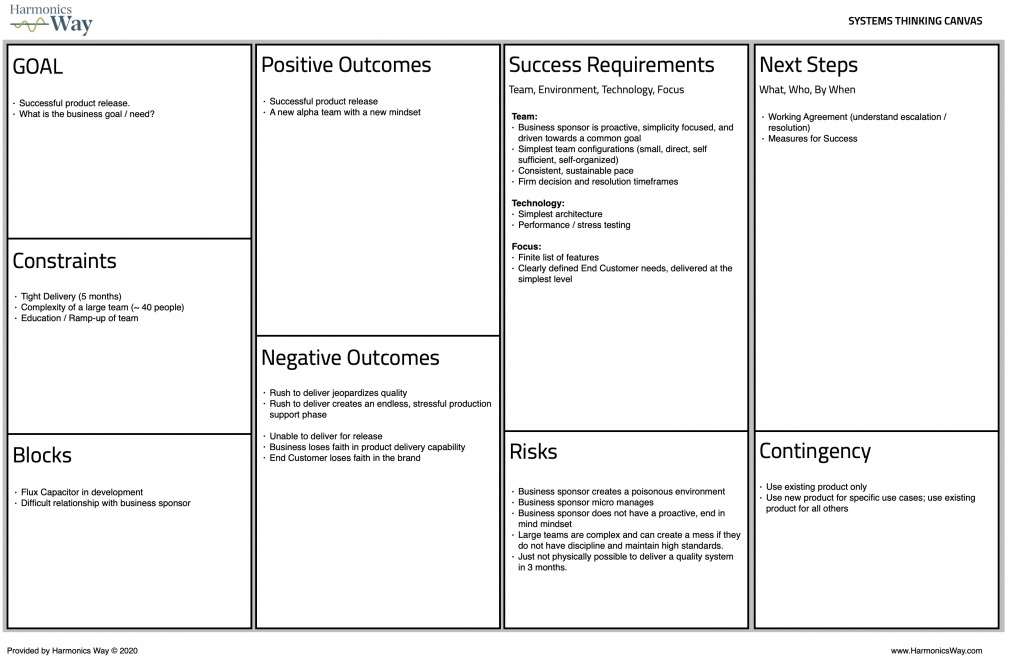

The first step is to focus on the project's concrete, measurable goals, such as virtualizing a server, increasing website visitors, or launching a new product. Next, consider the constraints on achieving those goals, usually along the lines of time, budget, and human resources. Finally, consider the potential blocks: the concrete things that may lead to failure or stand in the way of success.

In the example above, you can see how the risk assessment framework allows clients to organize their success requirements and inherent risks. Every project has both positive and negative outcomes.

To achieve positive outcomes, consider the success requirements that address each of the potential negative outcomes. For example, if the rush to deliver jeopardizes quality, then implement the simplest possible product while ensuring that you test thoroughly. Any risks that you can't address should have a contingency plan.

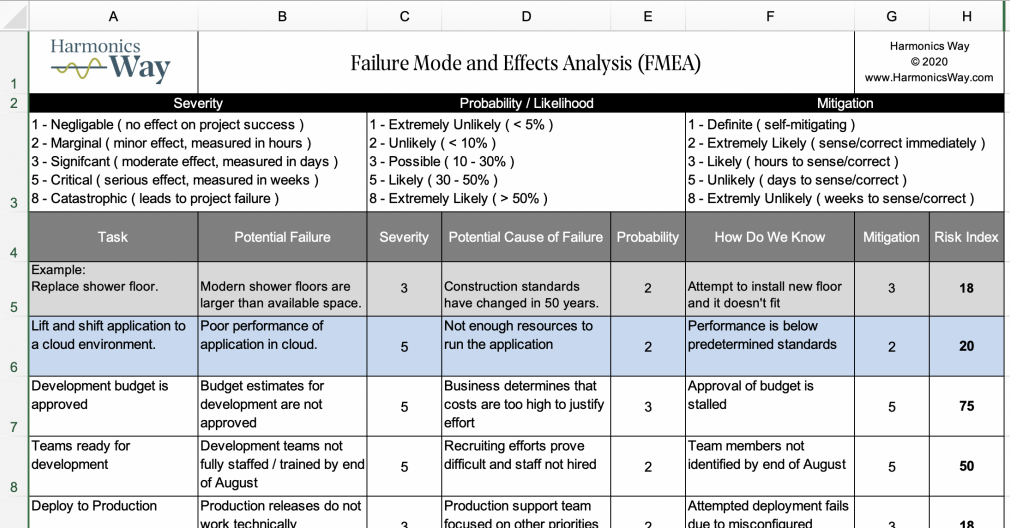

A project assessment starts by breaking a project down into a series of tasks. Each task has a way it can potentially fail. Once the failure mode is identified, it is assigned a severity value based on the impact the failure would have on total project success. Next, a probability value is assigned, representing the likelihood that the failure would actually occur. Finally, a mitigation value is assigned, reflecting how likely you are to detect and correct the potential failure. Because larger numbers signal more risk, a failure that is easy to detect and correct gets a low mitigation score.

Each of these values is a numeric score drawn from the Fibonacci sequence: 1, 2, 3, 5, or 8. Restricting scores to these values keeps evaluators from manipulating the score with intermediate values. You want to know when a potential failure will affect your project's success, and a larger number highlights that failure point.
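The scoring scale described above can be enforced with a small check. This is a minimal sketch rather than part of any FMEA standard or of the worksheet itself; the names `ALLOWED_SCORES` and `validate_score` are hypothetical and chosen only for illustration.

```python
# Hypothetical helper: names are illustrative, not taken from the FMEA worksheet.
# The only scores permitted are the Fibonacci values named in the text.
ALLOWED_SCORES = (1, 2, 3, 5, 8)


def validate_score(value: int) -> int:
    """Return the score unchanged if it is on the scale, otherwise raise ValueError."""
    if value not in ALLOWED_SCORES:
        raise ValueError(f"score must be one of {ALLOWED_SCORES}, got {value!r}")
    return value
```

Rejecting a value such as 4 or 6 outright is one way to stop evaluators from softening a score with an in-between number.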

Let's say your task is to lift and shift an application to the cloud. Put that in the first box. The second box describes a failure mode: let's say that the application might perform poorly in a cloud environment. This failure is a severe issue, so let's rank that a five.

Now let's move to the cause of failure. One cause of failure is that the application is under-resourced in its new environment. Since the application in our example is a bit newer, there's a lower probability of poor performance—call it a two. Lastly, how likely is it that we'll be able to detect and fix the issue? Well, you'll know when the application doesn't meet expected standards, and you'll probably be able to fix it by allocating more resources, so let's call that a two as well.

In this analysis model, we judge the overall risk of failure by multiplying the numbers horizontally across columns. Any failure mode with a risk index greater than 30 needs particular attention. In our example, 5 × 2 × 2 = 20, so there's a comparatively low likelihood that the project will fail in the manner described. Repeat this process for each task of your project.
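The same calculation can be sketched in Python for readers who would rather script it than fill in a spreadsheet. This is an illustrative sketch only: the `FmeaRow` class, its field names, and the `ATTENTION_THRESHOLD` constant are assumptions made for this example, not the layout of the actual worksheet. The scores and the threshold of 30 come from the walkthrough above.

```python
from dataclasses import dataclass

# From the text: a risk index greater than 30 needs particular attention.
ATTENTION_THRESHOLD = 30


@dataclass
class FmeaRow:
    """One worksheet row (hypothetical structure for illustration)."""
    task: str
    failure_mode: str
    cause: str
    severity: int      # impact on total project success
    probability: int   # likelihood the failure occurs
    mitigation: int    # low when the failure is easy to detect and correct

    @property
    def risk_index(self) -> int:
        # Multiply the three scores across the row.
        return self.severity * self.probability * self.mitigation

    @property
    def needs_attention(self) -> bool:
        return self.risk_index > ATTENTION_THRESHOLD


# The lift-and-shift example from the walkthrough above.
rows = [
    FmeaRow(
        task="Lift and shift an application to the cloud",
        failure_mode="Application performs poorly in the cloud environment",
        cause="Application is under-resourced in its new environment",
        severity=5,
        probability=2,
        mitigation=2,
    ),
]

# List the riskiest rows first so they get reviewed first.
for row in sorted(rows, key=lambda r: r.risk_index, reverse=True):
    status = "needs attention" if row.needs_attention else "acceptable"
    print(f"{row.task}: risk index {row.risk_index} ({status})")
```

Sorting by risk index is a design choice for this sketch; it simply puts the rows that most deserve a contingency plan at the top once you have scored every task.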

The Harmonics Way point of view is that while the "fail fast" ethos is good for innovation, it's best to bake in quality from the start. By performing analysis as part of your project's planning phase, you can identify and understand risks before they materialize and then make a plan to avoid them. Although you still have the opportunity to fail and iterate, your iterations are that much faster, and you can sidestep risks that have the potential to derail your project entirely (as opposed to merely creating a learning experience).

The Harmonics Way Systems Thinking Canvas [Download PDF]

The FMEA Worksheet [Download Excel Worksheet]